6 Keys to Prioritizing Chatbot Deployments

Overview: This week on Digital Conversations with Billy Bateman, we are joined by Amanda Stevens, conversational designer for Master of Code. She walks through 6 keys to prioritizing a use case for conversational AI.

Guest: Amanda Stevens. A charismatic, creative, and driven leader with strong experience in conversation design, Natural Language Processing (NLP) design and optimization. Amanda is passionate about designing AI solutions to educate, engage and inform users. She is a self-motivated individual committed to teamwork and achieving measurable results.

Transcript

Billy: All right everyone, welcome to the show. Today I’m joined by Amanda Stevens, the director of conversation design at Master of Code. Thanks for joining us today Amanda.

Amanda: Thanks so much for having me.

Billy: So before we jump into this topic, which I think is going to be really interesting, tell us a little bit about yourself and about Master of Code and what you guys do.

Master of Code

Amanda: Awesome. Exactly as you said, I’m the director of conversation design. I’ve been doing conversation design for just over a year and a half. I’ve been with the company for two years and initially started out as a key account manager doing strategy with clients. I had this curiosity when we looked at our implementations and what we were putting out there. How are users responding? How could we make improvements? How did language and design affect what was happening within the bot experience? That curiosity allowed me to move into conversation design.

It’s such an interesting part of conversational AI, which is continuing to grow, and it’s really cool to be part of that. Master of Code started 15 years ago as a software development company and moved into mobile apps when they were really gaining momentum. Then in 2016 we launched our first conversational solution: a chatbot on Messenger for a global luxury retail brand. That was really great, and we have continued to grow that part of our business, now doing chat and voice experiences across a variety of channels.

Billy: Awesome, awesome you guys work with brands like T-Mobile and World Surf League.

Amanda: Yeah, all across the board, enterprise level companies. And we also do work with startups as well. They’re super interesting use cases that come from them. They move really quick and they’re quite lean and we love being challenged in that way as well.

Billy: We work with big brands, work with small ones. The small ones, they’re a lot more fun because it’ll just be like yeah we’ll try it, who cares if maybe we get embarrassed.

Amanda: We learn a lot from startups just as much as we learn from our larger clients as well.

Billy: Before we get into the topic, which is about prioritizing bots, which is just really interesting and something I think is overlooked: if we were going to look up Amanda on LinkedIn and Facebook and all of that and try to figure out who this Amanda Stevens person is, what is something that we would not be able to figure out from just looking you up on the Internet?

Flamer the Flame

Amanda: Sure. Well, something that comes to mind, and it’s just because social networks didn’t exist at the time, but kind of a funny fact about me that you wouldn’t find online is that in junior high, I was my school’s mascot. I was called Flamer the Flame. We were the Flames, and essentially I looked like a fiery raindrop. That was quite fun. I did retire after two years. I don’t think anyone replaced me right away. It was fun to get the crowd hyped, essentially parents watching their kids.

Billy: That’s fun. No one picked up the torch afterwards?

Amanda: No, I don’t think so. It’s kind of a tough job. It’s a bit intimidating, I imagine, especially if you’re 14 or 15.

Billy: I’m sure, in junior high for sure. Well, let’s get into it. Before we get into prioritization, there is the whole topic of designing conversations. We can do a lot of things, and we’ve done a lot of podcasts on the best practices for designing a good bot and a good conversation that drives towards an outcome and has a persona. And we have the use case. We aren’t going to do too many fringe cases. It’s driving towards this one thing: to build not a big bot, but a well-performing bot.

I want to get into with you, how do we prioritize designing the different bots or conversations that we’re going to have with our customers, with our leads, with anybody when we’re looking at the bot? One of the things you brought up to me was hey these decisions should be driven by data. When you start looking at what bots we’re going to build for clients, where do you go to find that data?

Finding Data to Build the Bot

Amanda: To step back a little bit, you’re exactly right. There is so much content out there about how to design: best practices, language to use for live agent handover, what really good error state messages look like, which is great. I love sharing insights on that as well, but something we’re seeing that’s quite overlooked is the data: the data that’s used to inform these use cases and to help prioritize them. We have clients come to us and say we really want to build a bot. We want to alleviate, for example, live agent traffic. They’re getting overloaded with requests.

How can we automate that? Maybe the bot is an internal-facing chatbot that helps with FAQs within the organization. The stakeholders of the project will come to us and they say “we think the use case should be this or we think it should be that.” Data tells us about our story. I love that quote. I saw it on a blog recently. We want to make sure that what they’re communicating to us, what the client is saying to us, is backed by data. We can’t go off assumptions when they’re telling us “we really want a bot that does XYZ.”

We need to make sure that we’re on the same page as them, that we’re in line with them, in order to build a successful solution. I’ve seen other documentation and other blogs where people suggest building a bot with a use case that’s low priority and low cost, because that way it’s low risk.

If it’s low priority it might not blow up. But I think that’s the wrong message. People aren’t going to use something that’s low priority. You have to address a pain point that people are feeling.

Billy: I think to drive adoption with customers we see the same thing. They’re like “OK let’s start with something really light and it’s not going to get a lot of traffic.” You don’t learn anything if you can’t get the traffic through there, especially if it doesn’t lead towards a measurable outcome whether that’s a lead or solving a support question or booking a meeting whatever it is for each client. If it’s like well we just helped them out. We helped them find what they needed on the website. It’s hard to measure the ROI from that and was it worth everybody’s time?

Amanda: There are high expectations now. Chatbots have been around for a while now. I think in the beginning low cost, low priority was safe because maybe the technology was still new. Companies or agencies like ourselves were feeling things out. Maybe the platforms to launch bots weren’t as sophisticated, but that’s changed. You want to make sure that you’re doing something that’s high priority, that is desired by the end users, that’s viable, and that makes sense.

You had asked what data we look at. It could be from multiple sources. If it’s a customer-facing bot we look at tweets. What are they asking the company? What are they saying on social media? If there are already live agents in place, we look at those transcripts. We make sure they’re scrubbed, of course.

We look for commonalities and plot them, even just into pie charts, to see the range and to see if there’s an influx of questions related to one subject versus the others. If it’s an internal-facing bot, for example a chatbot serving the employees of an organization with frequently asked questions, we’ll interview them. We’ll take a select group of people and we’ll ask: What’s the current state? What is your pain point? How do you look for this information?

Something that we do as a shortcut is we often run workshops, on site or, in this current climate, virtually. That really allows us to talk with the end users and really map out what the current state is today. Why is it challenging?

Does it make sense to be in an automated solution? Just because something is challenging today doesn’t mean a chatbot or voice bot is going to fix it. Sometimes, and we’ve uncovered this, the issue is the program itself: they wish to take it out of the picture and just communicate through a chatbot to access data from that program, when maybe the program itself needs extra features. If a bot can’t aggregate data from all these different places, let’s go to the source and figure out whether a platform or a program or software piece needs to be optimized.
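The tallying step Amanda describes, grouping scrubbed transcripts and social posts by subject to see which one dominates, can be sketched in a few lines. This is only an illustration under stated assumptions: the topic names, keyword lists, and sample messages below are hypothetical, and simple keyword matching stands in for whatever categorization a real team would use.

```python
from collections import Counter

# Hypothetical topics and keywords, for illustration only. In practice the
# categories would come from reading the scrubbed transcripts, tweets, and emails.
TOPIC_KEYWORDS = {
    "shipping": ["ship", "delivery"],
    "returns": ["return", "refund"],
    "loyalty": ["coupon", "points"],
}


def categorize(message: str) -> str:
    """Return the first topic whose keyword appears in the message, else 'other'."""
    text = message.lower()
    for topic, keywords in TOPIC_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return topic
    return "other"


def topic_shares(messages: list[str]) -> dict[str, float]:
    """Fraction of messages per topic, most common topic first."""
    counts = Counter(categorize(message) for message in messages)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {topic: count / total for topic, count in counts.most_common()}


# Made-up sample messages, not real customer data.
sample = [
    "Do you ship for free?",
    "I'm in Australia, can you ship it?",
    "How do I return my order?",
    "I never got my coupon email",
]
shares = topic_shares(sample)
for topic, share in shares.items():
    print(f"{topic}: {share:.0%}")
```

The resulting shares are the numbers that would feed a pie chart; a topic that dominates the tally is a candidate use case worth examining further.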

Billy: That’s a good point. Could you share a little more? I always loved that we learn these things as consultants and building bots. There’s always a story behind it. Maybe the conversational solution isn’t the best thing for this. I don’t know if you have any stories that you could share when somebody came and said “hey we want a bot to do this” and you guys said, “actually you probably don’t.”

Use Cases

Amanda: Sure. Oh gosh, we have quite a few. On a project I’m working on right now for a biotech company, the employees have to deal with compliance. They have to deal with so many different programs, SOPs, documentation reviews. They also have to complete training on the side to make sure that they are in compliance. On top of everything else, part of their job is to make sure they’re compliant and complete training. So they wanted a bot that would connect to their training system and help them prioritize which training to take first.

If you have 10 trainings, sometimes it’s not by due date. It should also be by urgency. What we uncovered in the workshop was this: great use case, there’s a need, there’s a pain point, people wanted it. But the platform itself, the training platform where these trainings are, actually had limitations. Even if we put a conversational solution in place, it wouldn’t be able to pull in that data.

So before investing in a bot, we needed to speak with the technical team and say your training platform needs some work itself before we continue to go down this path. Another example for a client facing bot, they had a good use case. It worked, but the data that they were looking at, they made conclusions about that data when really it was related to how they prioritized it. I’ll give you an example. A big retail brand made their own bot and they chose a use case related to if you hit a certain amount of loyalty points then you get a coupon, essentially.

This only happens to a select group of users, high purchasers. And if they didn’t receive their email with that special coupon for spending so many dollars at the brand and its stores, they could go to the chatbot and kind of troubleshoot and either get a coupon sent to them again or go through many different scenarios to resolve that. They were looking at the data and they saw really low engagement. And they thought maybe our users don’t like using a bot to troubleshoot this issue, but when we looked at the data, again, there weren’t that many queries for it because it’s only affecting such a small group of people. They would only go to the bot when they didn’t receive their coupon.

So it’s a support-based thing. They made these assumptions, or these conclusions: maybe the bot wasn’t a good idea at all, the technology just wasn’t great, people don’t like talking to the bot about this. But really, how many times a year was this affecting this group of people? Maybe once a year. Like I said, they’re supposed to get this email. So it was very few and far between when they entered the bot.

Billy: Yeah, that makes sense. We see similar things. “I can’t build a bot for this,” and I’m like how many people are we going to actually get through this? It seems like a low priority thing. We love that they want to use a bot, but it’s not always the best use of everyone’s time. So, you had six key points that you look through when you’re prioritizing building a use case for conversational AI. Can you walk us through your six points and why you’ve chosen those things as you guys have developed your own methodology?

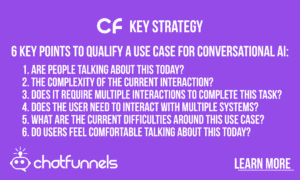

6 Key Points To Qualify a Use Case for Conversational AI

Amanda: Absolutely! Sure. In order for us to kind of come to these six areas, we did a lot of research. Not only what we experienced with our own clients, but we also looked at what Google is doing, what Microsoft is looking at, what Audio is looking at, and we kind of put everything together and came up with these six key points. You would look at these points once you’ve done your data analysis, once you’ve categorized queries and have a series of use cases that you can potentially build for in this conversational solution.

Number One

So the first thing that we look at: Are people talking about this today? Because if they’re not talking about it today, why would there suddenly be an influx of traffic related to this topic or this use case once you put a bot in place? Does the data exist that people are asking about this, that people are wanting to know more about this topic?

Billy: Let me pause you real quick. Let’s pretend that I’m a big brand and I want you guys to build me a bot. I don’t know exactly what, so how would I figure out if people are talking about this? Maybe it’s like a promotion that we’re running or a new product. Walk through a little bit of an example.

Amanda: Sure! In the data that we look at, those transcripts, those posts on social media, even emails to the brand, people will ask questions. Maybe it’s related to a product. If it’s retail, again, it could be something like “do you ship for free?” or “I’m in Australia, can you ship it?” They’re reaching out to the brand directly because they can’t find the information online or in store or wherever. We look at what they’re asking when they reach out to the brand. Is there a theme or a trend there that can be automated?

Billy: That makes sense. OK keep going.

Amanda: So the next thing we look at is the complexity of the current state. Is the current state a brief interaction? When we think about prioritizing these cases for our conversational solution, do we want to select a use case that’s going to require 20-plus interactions between the bot and the user? The more steps you have to get to where you’re going, the more chances there are for errors to occur, or for someone to fall out of the flow and maybe not complete it. When we’re prioritizing, we want to know: are the interactions brief already, or is it a really complex process to get the information we need from the user in order to point them in the right direction?

Billy: Okay, that makes sense.

Number Two

Amanda: And then we look at the current state. We always want to understand what the current state is in order to automate that. So we’ll even, you know, draw it out and look at what the different programs or platforms are and whether there are any integrations. What does that look like currently?

Number Three

Does it require, like I said, multiple interactions within a system to complete this task or to find this answer? Because if it’s, for example, someone just going to the web page on a website and getting their answer, do we really want to build a conversational solution? Is it saving time? How is it appeasing this pain point if they only have to deal with one system? We want to make sure to drive efficiency with automation. A good use case to prioritize is one that requires multiple interactions with the system and multiple systems as well, which is the next step.

Number Four

So multiple interactions was the third one and the fourth is multiple systems, because that makes things easy. If a chatbot can pull data from a database in one system and from another system or platform and put it all together, that’s so much easier for a user to digest that information, and it points them in the right direction.

Billy: This is one of the things that I really love, that you made this a point, something we look at. Whether you’re doing ManyChat, your own conversational AI bot, or even something like Drift or Intercom, the great thing about all these things is that you have all these integrations. You can pull data from different sources and that chatbot can kind of be a concierge and say “oh let me check on this for you or make sure you’re in this box.” It’s not highly complex, but just easy things that the person doesn’t need to do anymore. You can have a bot do these things and look at all these systems.

Amanda: Exactly! I’ll bring back the example of the biotech company that wanted to create a bot that prioritizes training, which we are doing now that the training software has been optimized and features have been added. It’s going to connect not just with the training system; I can also say, hey, can you make a reminder for me to do that training? It’s going to connect with my Google Calendar and also send me an email. What a great use case! It’s connecting to your email, the training system, and your calendar. You’re making all that happen in one conversational environment.

Billy: Yeah, if a bot just lives on its own with no integrations, it’s not that useful most of the time. The beauty is that it can look at your calendar, it can look into the CRM, or it can look into our marketing automation and see, oh yeah, you were sent this email. It’s just awesome what we can do with that! So I’ll let you keep going. I know you’ve got two more to go through.

Number Five

Amanda: The last two are around what the current difficulties are around this use case. If we are going to prioritize this, that means there needs to be a benefit to building it as a conversational solution over the current state. So many of these are very much related to each other, but we do ask the client these six separate questions because it really helps them go through the motion of “Okay, what am I trying to make happen? What efficiencies am I trying to drive? Am I saving users time? Am I offering a more seamless solution?” The conversational side is great too, and that level of personalization is also great. But let’s look at the efficiencies that are being created with this.

Number Six

Finally, and I love bringing this up too because, again, you get interesting answers from project to project: do users feel comfortable talking or typing about this today? Are these use cases so sensitive that you might not feel comfortable typing it to a bot? It’s something really, really important that sometimes gets overlooked, because you just think if you can talk to, maybe, a live agent about it today, why wouldn’t you be able to talk to a bot? But it is slightly different when you’re talking to that automated system.
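The six questions can be written down as a simple yes/no checklist for comparing candidate use cases. The sketch below is an assumption-laden illustration, not Master of Code’s actual tooling: the field names paraphrase the questions from the conversation, the equal weighting is an arbitrary choice, and the two candidates and their answers are invented.

```python
from dataclasses import dataclass, fields


@dataclass
class UseCaseCheck:
    """One yes/no answer per question; field names paraphrase the conversation."""
    people_ask_about_it_today: bool
    bot_flow_would_be_brief: bool
    task_needs_multiple_interactions: bool
    task_spans_multiple_systems: bool
    current_state_is_difficult: bool
    users_comfortable_telling_a_bot: bool


def score(check: UseCaseCheck) -> int:
    """Count the 'yes' answers. Equal weighting is an assumption of this sketch."""
    return sum(getattr(check, field.name) for field in fields(check))


def rank(candidates: dict[str, UseCaseCheck]) -> list[tuple[str, int]]:
    """Candidate names with scores, highest score first."""
    scored = [(name, score(check)) for name, check in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Invented candidates and answers, purely for illustration.
candidates = {
    "single_page_faq": UseCaseCheck(True, True, False, False, False, True),
    "training_reminders": UseCaseCheck(True, True, True, True, True, True),
}
ranked = rank(candidates)
for name, points in ranked:
    print(f"{name}: {points}/6")
```

A raw count like this is only a conversation starter; as Amanda notes, the questions overlap, and a single "no" on something like user comfort may outweigh several "yes" answers.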

Billy: For sure. I think this is a good one as well because we think we can automate everything with the bot. But there are those things where people are not going to be like “okay, I’m just gonna give up this information to this bot and I don’t know where it goes.” It doesn’t work for everything, but that’s a great point. You walked us through the methodology. Where do you think people are missing this right now? We’ve got a lot of agencies and consultancies building bots. Out of these six, where do you see people probably making mistakes and not thinking about this?

Mistakes to Avoid

Amanda: Sure! Oh, that’s a really good question. I think generally a lot of companies and agencies are looking at data. Say a stakeholder says, “we want to focus on this use case.” We’ll do a little bit of due diligence, look at data and say okay, this makes sense. I think it’s the efficiency piece, looking at the multiple systems a user needs to interact with. There are a few chatbot use cases out there where the process of going through the bot is just as fast as going through the current state or the original state. We see brands that want to check this innovation box because conversational solutions are awesome.

They’re super interesting and they’re fun especially if you’re dealing with a really robust one with no buttons or text. You just ask it a question it answers back. That’s really cool but, again, is it efficient? Is it saving time? Is it easier for users to use that rather than what was in place beforehand?

Favorite Part of Working with Bots

Billy: Yeah, I agree. I think sometimes we get caught up, you know, whether it’s us as an agency or just a company that has bought a bot solution. And they’re like “oh we’ll just swap the bot out!” It’s not always the best thing to do, especially with forms. With some forms, it’s easier to just have the form than the bot. Okay Amanda, well before we wrap up I want to ask you, I know you’re super passionate about conversational design and about bots. You’ve been in this for a few years. What do you like best? What’s your favorite thing about working with bots?

Amanda: My favorite thing? I love it if we have a workshop for our project, and we always push for that. That’s my favorite. It’s a little bit like detective work. You’re coming in and you have an idea of what that business is about, what they sell, what they produce, what they offer. But after meeting with people, those end users potentially or people on the team, you really understand how they speak amongst themselves and what language they use.

I love collecting sample dialogue. If the bot, again, is for employees, they have acronyms. They have terms and slangs and short form words and products that we at Master of Code might not know about. And it’s so cool. We’ll do back to back exercises where a person pretends to be the bot. Then a user is talking to them. We’ll give them the use case that we’re trying to prioritize. Just to watch how the two will interact and the words they use, I think that’s fascinating.

I’ve always been fascinated with how language and messaging can be used to turn heads. I come from the advertising space, so it was all about how we use messaging on, let’s say, a billboard versus how you would use it on a website or a digital ad. How would you modify it to turn heads, to captivate people, to drive that engagement? That’s what I love about it. So it’s very much about the language and uncovering real problems and pain points that AI can help address and solve.

Billy: Awesome, awesome. And then if anyone’s like, hey, I’m just getting into conversational design, whether I’m a demand gen marketer somewhere or I wanna get a job somewhere else, you know, in an agency that does this, what’s your advice for getting started?

Amanda: Sure. I think there isn’t a degree for conversational design yet. There are a few courses out there. Definitely leverage online courses if you can. But I think the foundation really comes from good design. Spend the time and effort really understanding design thinking, user experience, what user personas are, and how you can build out user journeys.

That is the foundation, I think, of conversation design. Once you have that overall user experience foundation, an understanding of customer experience and how a customer would go through a flow or even tackle a website, conversation design is just another level of that. It’s designing for different channels.

Billy: No you’re totally right. I’ve never thought about that. But I did a couple design thinking classes as part of my MBA. When I first got into this, I was definitely leveraging those without necessarily connecting the dots. That’s a great point. Thanks for coming on! It’s been great! This is really insightful. If people want to connect with you and continue the conversation, what’s the best way for them to reach out?

Contact Information

Amanda: Sure! Thank you so much for having me, Billy. This has been so fun! Everyone can find me on LinkedIn. Just search Amanda Stevens. I think my vanity URL is Amandastevens1. You can email me as well. It’s amanda.stevens@masterofcode.com.

Billy: Okay Amanda. Thanks and we’ll chat later.

Amanda: Thanks so much!